Agentic AI in banking is ready. Most lending infrastructure isn't.

Many financial institutions are leveraging autonomous AI agents across multiple areas of their business, including customer service, internal operations, and relationship management.

But agentic AI adoption in lending is moving at a slower pace. Why? Because lending sits at the intersection of the highest financial risk and the strictest regulatory scrutiny. Every automated decision affects a borrower’s access to credit, and every action must stand up to audit, explainability, and fair treatment standards.

Many lenders remain cautious because of infrastructure and compliance concerns. However, compliance may actually become a competitive advantage: lenders that build the right compliant infrastructure will be able to move faster.

Agentic AI in banking is no longer experimental

Large financial institutions have already moved beyond proofs of concept. In fact, more than half of financial institutions are already deploying or implementing generative AI, with adoption exceeding 75 percent among the largest banks, according to ABA Banking Journal.

Autonomous agents now handle customer inquiries, assist relationship managers, and orchestrate internal workflows at scale. This shows that agentic AI can be used in a regulated environment. The question for lenders is how to deploy agents in ways that maintain control, transparency, and policy enforcement.

Why lending is uniquely regulated in an agentic AI world

Not all banking functions carry the same regulatory weight. Wealth management recommendations, internal productivity tools, and customer support automation are subject to oversight, but lending operates under a uniquely dense framework of rules and expectations.

Every loan approval, denial, pricing adjustment, or collections action is subject to fair lending law, consumer protection standards, and ongoing supervisory review. Regulators expect lenders to demonstrate not only that decisions were consistent with policy, but also that policy was applied consistently across protected classes and over time.

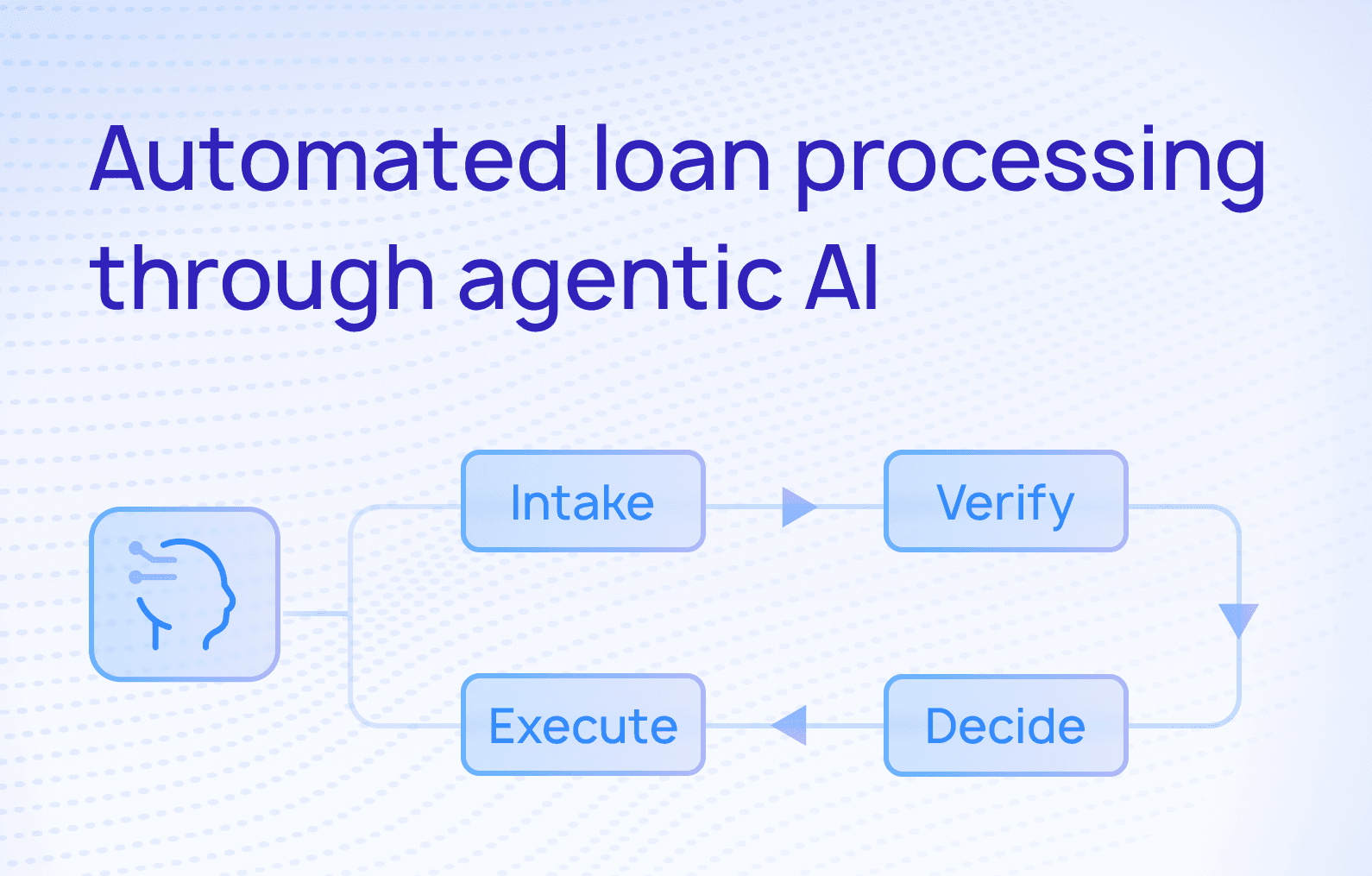

This is where agentic AI changes the equation in lending. An autonomous agent is not just generating content or surfacing recommendations. It is taking actions that have legal and financial consequences. If an agent modifies loan terms or initiates a collections workflow, that action becomes part of the lender’s official record and must be explainable, defensible, and reproducible.

Agentic AI compliance must be a core design requirement. Systems that were built for manual workflows and after-the-fact review will struggle to provide the level of traceability and control that autonomous agents require.

Agentic AI risk management in lending

Banks are already familiar with managing model risk, data privacy, and cybersecurity. Agentic AI introduces a different layer of risk in lending: the risk of autonomous decision execution.

In lending, risk management is about whether every automated action aligns with documented credit policy, pricing rules, and regulatory obligations. An agent that approves a loan outside of policy or communicates terms in a way that could be interpreted as misleading creates exposure that is both financial and legal.

This means agentic AI risk management in lending must address three dimensions simultaneously:

- Policy adherence: Agents must operate within clearly defined credit and servicing rules that can be updated as regulations or business strategy changes.

- Explainability: Lenders must be able to articulate why a specific decision was made, using language and logic that regulators and auditors can understand.

- Decision auditability: Every action taken by an agent must be logged, timestamped, and tied to the data and rules that informed it.

Without these capabilities, agentic AI introduces more uncertainty than efficiency. With them, it becomes a controllable extension of existing risk frameworks.
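The three dimensions above can be illustrated with a minimal Python sketch. Everything in it is a hypothetical assumption made for illustration: the `CreditPolicy` thresholds, the field names, and the `decide` function are not real underwriting rules or any vendor's API. The point is only that a single decision step can check policy, collect plain-language reasons, and write a timestamped record tied to the inputs and the policy version.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


# Hypothetical credit policy; thresholds are illustrative only.
@dataclass(frozen=True)
class CreditPolicy:
    version: str
    min_credit_score: int
    max_debt_to_income: float


@dataclass(frozen=True)
class Application:
    applicant_id: str
    credit_score: int
    debt_to_income: float


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str        # when the decision was made (UTC, ISO 8601)
    policy_version: str   # which rules were in force
    inputs: dict          # the data the decision was based on
    approved: bool
    reasons: list         # plain-language explanation of the outcome


def decide(app: Application, policy: CreditPolicy, audit_log: list) -> AuditRecord:
    """Evaluate an application against policy and log the decision."""
    reasons = []
    # Policy adherence: each rule is checked explicitly.
    if app.credit_score < policy.min_credit_score:
        reasons.append(
            f"credit_score {app.credit_score} is below minimum {policy.min_credit_score}"
        )
    if app.debt_to_income > policy.max_debt_to_income:
        reasons.append(
            f"debt_to_income {app.debt_to_income} exceeds maximum {policy.max_debt_to_income}"
        )
    approved = not reasons
    if approved:
        reasons.append("all policy rules satisfied")
    # Decision auditability: record inputs, rules version, outcome, and time.
    record = AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        policy_version=policy.version,
        inputs=asdict(app),
        approved=approved,
        reasons=reasons,
    )
    audit_log.append(record)
    return record


policy = CreditPolicy(version="2024-01", min_credit_score=660, max_debt_to_income=0.43)
audit_log = []
result = decide(Application("A-1001", 640, 0.38), policy, audit_log)
print(result.approved, result.reasons)
```

In this sketch the explanation is generated from the same rule checks that produce the outcome, so the stated reasons cannot drift from the logic that was actually applied.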

Auditability, fair lending, and UDAAP

In lending, an action without an audit trail is more than incomplete. It is a compliance liability.

Fair lending laws require lenders to demonstrate that similarly situated applicants are treated consistently. That means being able to reconstruct how a decision was reached, what data was used, and how policies were applied. If an autonomous agent denies a loan and the lender cannot clearly explain the reasoning or reproduce the decision path, that gap becomes a regulatory risk.

The same applies to Unfair, Deceptive, or Abusive Acts or Practices (UDAAP) standards. Communications and actions taken by agents must be fair, transparent, and not misleading. An agent that negotiates payment plans, adjusts fees, or sends borrower communications is effectively acting as a representative of the institution. Its behavior must therefore be constrained by the same guardrails that apply to human employees.

These requirements shape how systems must be architected to capture the full lifecycle of each decision, from input data through rule evaluation to final action.
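One way to picture "reproducing the decision path" is a replay check: given a stored record of inputs and the policy version in force, re-run the rules and confirm the same outcome. The sketch below is a simplified assumption, not a description of any real platform. The `POLICIES` registry, its version names, and the single credit-score rule are all hypothetical.

```python
# Hypothetical versioned rule sets; a real policy would carry many more rules.
POLICIES = {
    "v1": {"min_credit_score": 640},
    "v2": {"min_credit_score": 660},
}


def evaluate(inputs: dict, policy_version: str) -> dict:
    """Apply the named policy version to the inputs and return a decision record."""
    rules = POLICIES[policy_version]
    approved = inputs["credit_score"] >= rules["min_credit_score"]
    return {
        "inputs": dict(inputs),            # copy so the record is self-contained
        "policy_version": policy_version,
        "approved": approved,
    }


def replay_matches(record: dict) -> bool:
    """Re-run the recorded inputs against the recorded policy version."""
    replayed = evaluate(record["inputs"], record["policy_version"])
    return replayed["approved"] == record["approved"]


record = evaluate({"credit_score": 650}, "v1")
print(record["approved"], replay_matches(record))
```

Because the record stores the policy version alongside the inputs, a decision made under an older policy can still be reproduced after the rules change. Without the version, the same inputs could yield a different answer on replay and the original outcome would be hard to defend.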

Building compliant agentic AI in banking

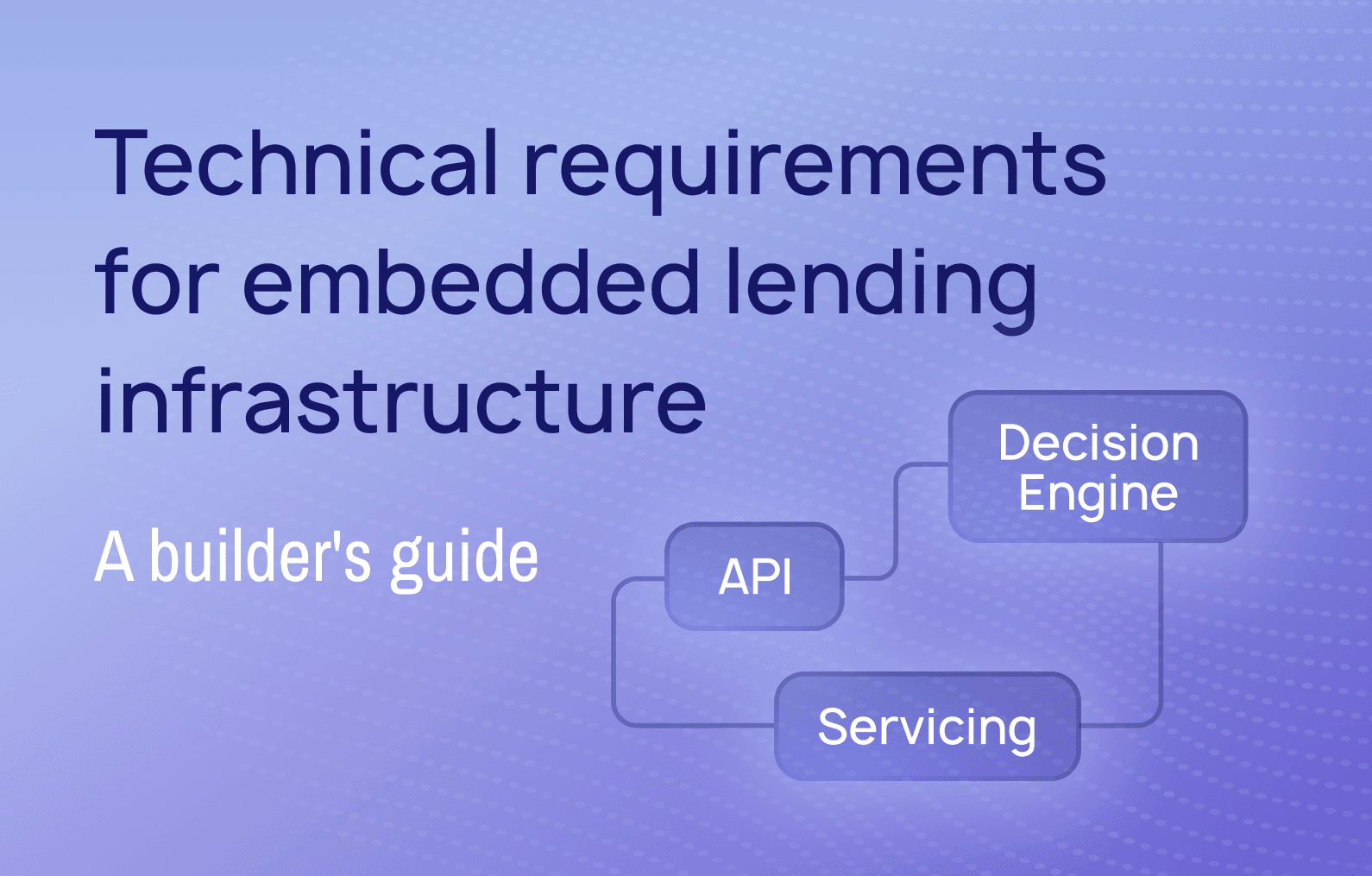

The institutions that successfully deploy agentic AI in lending run agents inside systems where regulatory controls, policy engines, and structured data are already part of the core platform.

Compliance-ready agentic AI looks different from the current pattern of bolting generative models onto legacy workflows. Instead of agents improvising actions against loosely structured data, they operate within clearly defined objects such as accounts, loans, payment schedules, and credit policies. Every automated loan processing action is validated against business rules before it is executed, and every decision is recorded in a way that supports downstream audit and reporting.
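The "validate before execute" pattern described above might look like the following sketch. It is illustrative only: the `Loan` object, the `MAX_RATE_CHANGE` limit, and the event fields are hypothetical names chosen for this example, not part of any specific lending platform.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Loan:
    loan_id: str
    annual_rate: float  # e.g. 0.12 for 12 percent


# Hypothetical servicing rule: an agent may move the rate by at most 2 points.
MAX_RATE_CHANGE = 0.02


class PolicyViolation(Exception):
    """Raised when a proposed action falls outside configured rules."""


def validate_rate_change(loan: Loan, new_rate: float) -> None:
    if new_rate < 0:
        raise PolicyViolation("rate cannot be negative")
    if abs(loan.annual_rate - new_rate) > MAX_RATE_CHANGE:
        raise PolicyViolation("rate change exceeds allowed limit")


def apply_rate_change(loan: Loan, new_rate: float, event_log: list) -> Loan:
    """Validate an agent's proposed rate change, then execute or reject it."""
    try:
        validate_rate_change(loan, new_rate)
    except PolicyViolation as exc:
        # Rejected actions are logged too, and the loan is left unchanged.
        event_log.append({
            "loan_id": loan.loan_id,
            "action": "rate_change",
            "status": "rejected",
            "reason": str(exc),
        })
        return loan
    updated = replace(loan, annual_rate=new_rate)
    event_log.append({
        "loan_id": loan.loan_id,
        "action": "rate_change",
        "status": "executed",
        "before": loan.annual_rate,
        "after": new_rate,
    })
    return updated


event_log = []
loan = Loan("L-1", 0.12)
loan = apply_rate_change(loan, 0.11, event_log)   # within limit
loan = apply_rate_change(loan, 0.05, event_log)   # outside limit, rejected
print(loan.annual_rate, [e["status"] for e in event_log])
```

The agent in this sketch never mutates the loan directly. It can only propose a change, and the platform decides whether the change is applied, which keeps the rules in one place rather than in each agent's prompt or code.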

As Colin Terry, Chief Product Officer at LoanPro, puts it, “Financial organizations want to innovate with AI, but they can’t afford to compromise compliance or control.”

Innovation in lending will be led by the institutions that pair advanced agentic AI models with a compliant, governed environment in which those models can operate.

This is where infrastructure becomes the differentiator. Platforms designed to treat lending as a system of record with configurable rules, event-level logging, and full data lineage create the conditions in which autonomous agents can act safely and at scale.

How lenders can move forward without compromising compliance

Lenders need clarity about whether their current stack can support autonomous decision-making without introducing new regulatory exposure. That evaluation starts with a few practical questions:

- Can the platform produce a complete audit trail for every automated decision and action?

- Are credit policies, pricing rules, and servicing workflows configurable in ways that an agent can reliably follow without hard-coded exceptions?

- Is decision logic centralized and versioned, or scattered across custom code and manual processes that are difficult to trace?

If the answer to these questions is unclear, deploying agentic AI on top of the existing stack will likely increase risk rather than reduce cost or cycle time.

Agentic AI has already proven that it can operate in regulated banking environments. For lenders to follow suit, they need to invest in infrastructure that turns compliance from a constraint into an enabler.

Ready to see what a compliance-ready AI platform looks like?

Learn how LoanPro is enabling agentic AI through its MCP framework.